Bat monitoring in New Zealand has a data problem: not too little data, but too much. A single AR5 acoustic detector can generate hundreds of individual recordings each night. Multiply that across multiple sites and a full field season, and you quickly end up with a dataset that no team has the time to review manually. As a result, critical conservation data often ends up sitting on hard drives, unanalysed and unused for months.

Alato Lab is the only AI built in New Zealand for New Zealand pekapeka (bat) species: the Long-tailed bat and the Short-tailed bat. It’s designed specifically to work with the AR4 and AR5, the most widely used bat detectors in the country, originally developed by DOC and now built here by us. Alato Lab automates processing, classifying, and reporting on NZ bat calls at a scale that makes manual review impractical.

This article takes you under the hood: how Alato Lab sees and interprets bat calls, how the model was built, and why having an AI purpose-built for NZ bats matters for serious bioacoustics work.

Why Does Bat Monitoring Need Automation?

Unlike bird recorders, most bat detectors work on a triggered basis. They listen for calls within the right frequency range and only record when activity is detected. New Zealand has two bat species, the Long-tailed bat and the Short-tailed bat, whose calls differ but fall mostly between 24kHz and 60kHz. On a productive night, a single unit might generate hundreds of individual recordings, each typically 15 seconds long. The volume adds up fast.

Manual review of this volume is painstaking, specialised work. For many organisations, the effort required for post-field analysis becomes a deterrent to monitoring in the first place. Automated detection changes that equation by offering two things manual review cannot:

- Consistency: the same criteria applied to all files, whether it’s file one or file ten thousand. AI doesn’t get tired or distracted.

- Repeatability: results that are directly comparable across projects, sites, and seasons.

In practice, Alato Lab aims to reduce analysis time by upwards of 85%. What previously took a week can be done in half a day or less.

How Does Alato Lab “See” a Bat Call?

The first thing to understand is that Alato Lab doesn’t “listen” to bat calls. It looks at them.

When a bat call is processed, it’s converted into a spectrogram, an image where the horizontal axis is time and the vertical axis is frequency. Rendered this way, a bat call becomes a distinctive shape: a sweep, a pulse, a curve that is characteristic of the species that produced it. To Alato Lab, that shape is the bat call. Not a sound, but a visual signature.
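To make the time-by-frequency picture concrete, here is a minimal sketch of how a spectrogram can be computed from raw audio samples using a short-time Fourier transform. It is an illustration only and not Alato Lab’s code: the function names, frame size, hop size, and the synthetic 40kHz test tone are all assumptions chosen for the example.

```python
import cmath
import math

def spectrogram(samples, frame_size=64, hop=32):
    """Return a list of time columns; each column holds one magnitude per frequency bin.

    Hypothetical helper: a plain DFT over Hann-windowed frames, written for
    clarity rather than speed.
    """
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
              for n in range(frame_size)]
    columns = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [samples[start + n] * window[n] for n in range(frame_size)]
        column = []
        for k in range(frame_size // 2 + 1):  # bins from 0 Hz up to half the sample rate
            total = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                        for n in range(frame_size))
            column.append(abs(total))
        columns.append(column)
    return columns

def peak_frequency(column, sample_rate, frame_size=64):
    """Frequency (Hz) of the strongest bin in one spectrogram column."""
    k = max(range(len(column)), key=column.__getitem__)
    return k * sample_rate / frame_size

# Synthetic example: a steady 40 kHz tone sampled at 192 kHz (assumed values).
sample_rate = 192_000
tone = [math.sin(2 * math.pi * 40_000 * t / sample_rate) for t in range(512)]
spec = spectrogram(tone)
print(len(spec), "time columns,", len(spec[0]), "frequency bins")
print("strongest bin near", peak_frequency(spec[0], sample_rate), "Hz")
```

Each column is one vertical strip of the image. A real bat call would show up as a bright shape moving across neighbouring columns instead of the flat line a steady tone produces.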

Why use images instead of audio for bat detections?

Machine learning models are built for pattern recognition, and recognising patterns in a static image is far simpler than analysing a constantly changing audio signal. A spectrogram converts sound into a visual form the AI can interpret far more efficiently, turning the sweep of a Long-tailed bat call into a shape the model recognises in an instant.

The AR5 and AR4 are unique among bat detectors because they save each bat recording as a bitmap image instead of audio. A 15-second recording at 192kHz is about 11MB as a WAV file, but only 100KB as a bitmap, roughly 100 times smaller. At bat monitoring scale, that difference makes the work practical instead of unworkable.
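The size comparison can be checked with some quick arithmetic. The sketch below uses the standard formula for uncompressed PCM audio. The text does not say which bit depth, channel count, or sample rate sits behind the 11MB figure, so the settings used here (16-bit mono, sampled at 384kHz, twice a 192kHz upper frequency) are an assumption that happens to land close to it. Other combinations give different sizes.

```python
def pcm_bytes(seconds, sample_rate_hz, bits_per_sample=16, channels=1):
    """Size of the audio payload of an uncompressed PCM WAV file (header excluded)."""
    return seconds * sample_rate_hz * (bits_per_sample // 8) * channels

wav_bytes = pcm_bytes(15, 384_000)  # assumed settings, see the note above
bitmap_bytes = 100_000              # the ~100KB bitmap figure quoted in the text
ratio = wav_bytes / bitmap_bytes

print(f"{wav_bytes / 1e6:.2f} MB vs {bitmap_bytes / 1e3:.0f} KB: about {ratio:.0f}x")
```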

What Data Was the Model Trained On?

A machine learning model is only as good as the data it was trained on. This is where many AI projects fall short, with models trained on clean, lab-quality recordings that bear little resemblance to real field data.

We went the other way. Our training dataset comprises over 50,000 tagged bitmap files drawn from real New Zealand field recordings gathered over years of monitoring. The AR4, originally developed by the Department of Conservation, has been New Zealand’s most widely used bat detector for many years. Taking over its production in late 2024 gave Alato access to an extensive archive of real-world data from diverse habitats, seasons, and acoustic conditions across the country. This is data that would have been extremely difficult to replicate from scratch.

That means the model has been trained on the full range of what NZ field recordings actually look like:

- Rain noise and wind interference

- Overlapping and partial calls

- Irregular signals from distant or fast-moving bats

- Varied habitat acoustics from dense forest to open farmland

What does “building an AI model” actually mean?

Most AI tools are not built from scratch, and for good reason: building AI models is complex and time-consuming. Alato Lab is no exception. We started with a powerful pre-trained image classification network, one already trained on billions of images, and retrained it on our bat call dataset. This process is called transfer learning. Think of it as directing a world-class visual pattern recognition expert toward a specific domain. The underlying capability is already there; the training is what makes it detection software specific to New Zealand bats.
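For readers who want to see the idea in miniature, here is a toy sketch of one common form of transfer learning: keep an existing feature extractor fixed and train only a small new classifier on top of it. Everything in it is a stand-in. The "backbone" is a hand-written function, not a real pre-trained network, the images are tiny synthetic grids, and the text does not say whether Alato Lab froze the base network or fine-tuned all of it.

```python
import math

def frozen_backbone(image):
    """Stand-in for a pre-trained network: a fixed feature extractor that is never updated.

    Here it returns the mean brightness of the top and bottom halves of a small
    greyscale image (a list of rows with values between 0 and 1).
    """
    half = len(image) // 2
    top = [p for row in image[:half] for p in row]
    bottom = [p for row in image[half:] for p in row]
    return [sum(top) / len(top), sum(bottom) / len(bottom)]

def train_head(features, labels, epochs=500, lr=0.5):
    """Train only the new classification head (logistic regression) on frozen features."""
    weights, bias = [0.0] * len(features[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            z = sum(w * xi for w, xi in zip(weights, x)) + bias
            p = 1 / (1 + math.exp(-z))
            err = p - y  # gradient of the log loss with respect to z
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias

def predict(image, weights, bias):
    """Probability of class 1 for a new image, using backbone features plus the trained head."""
    x = frozen_backbone(image)
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 / (1 + math.exp(-z))

def make_image(bright_top):
    """Synthetic 4x4 image: class 1 is bright on top, class 0 is bright on the bottom."""
    hi, lo = [0.9] * 4, [0.1] * 4
    return [hi, hi, lo, lo] if bright_top else [lo, lo, hi, hi]

images = [make_image(True), make_image(False)]
labels = [1, 0]
features = [frozen_backbone(img) for img in images]  # backbone is used, not trained
weights, bias = train_head(features, labels)

print("bright-top image  ->", round(predict(make_image(True), weights, bias), 3))
print("bright-bottom image ->", round(predict(make_image(False), weights, bias), 3))
```

The point of the split is that only `train_head` learns anything from the new data; the feature extractor carries over unchanged from whatever it was trained on before.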

How Alato Lab Ensures Accurate Automated Bat Detection

To maximise accuracy, Alato Lab uses two separate models that ask different questions in sequence:

- Step 1 - Detection: “Is there a bat call in this recording?” The model returns Bat, Possible Bat, or Non-bat.

- Step 2 - Identification: “If it is a bat, which species?” The model returns Long-tailed or Short-tailed.

The separation is deliberate. In conservation data, a false identification is worse than no identification at all. A misattributed species record doesn’t just introduce noise into a dataset; it can actively mislead population assessments and monitoring outcomes. So when the species model isn’t confident, it doesn’t guess. It returns “Possible Bat” and flags the record for review. Caution is a feature, not a limitation.
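The two-step sequence can be sketched as a small pipeline. This is a hedged illustration and not the product’s code: `classify`, `Result`, and the stub models are hypothetical names, and skipping the species step for "Non-bat" recordings is an assumption about how the stages connect.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Result:
    detection: str          # "Bat", "Possible Bat" or "Non-bat"
    species: Optional[str]  # "Long-tailed", "Short-tailed" or None

def classify(recording, detect: Callable, identify: Callable) -> Result:
    """Run the detection model first, then the species model only if needed."""
    detection = detect(recording)
    if detection == "Non-bat":
        # Assumption: the species model is never asked about non-bat recordings.
        return Result(detection, None)
    return Result(detection, identify(recording))

# Stub models for illustration only; real models would look at the image.
def stub_detect(recording):
    return "Bat" if recording["has_call"] else "Non-bat"

def stub_identify(recording):
    return recording["species"]

with_call = {"has_call": True, "species": "Long-tailed"}
rain_only = {"has_call": False, "species": None}

print(classify(with_call, stub_detect, stub_identify))
print(classify(rain_only, stub_detect, stub_identify))
```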

How Does the Model Find Bat Calls Within a Recording?

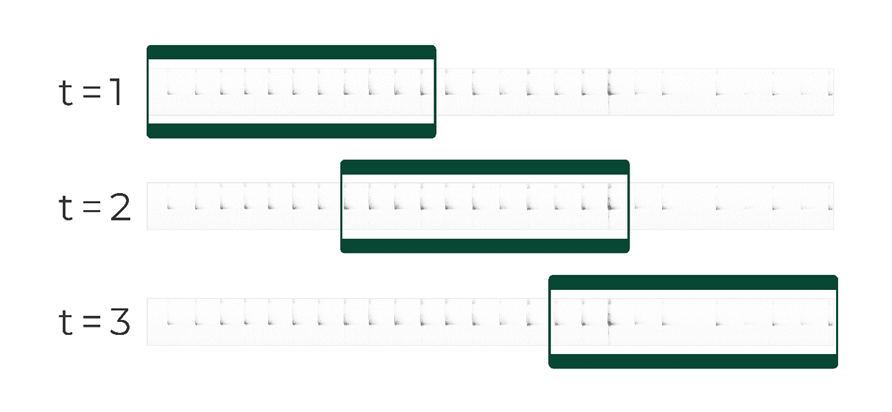

A recording might be 15 seconds long, but a bat call may last only a fraction of a second. The challenge is finding that call reliably, every time. Alato Lab does this using a sliding window approach.

Think of it like reading a long scroll through a small magnifying glass, sliding it from left to right. Each position reveals a narrow slice of the recording. The model examines each slice, looking for the shape of a bat call. The windows overlap as they move, so if a call falls across the boundary between two windows, at least one will capture it fully. Without overlap, calls at the edges could be split and missed.
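A short sketch shows why the overlap matters. The window width, stride, and image width below are made-up numbers in image columns, not Alato Lab’s settings, and the helper names are hypothetical. The guarantee it demonstrates holds when a call is no wider than the overlap between neighbouring windows.

```python
def window_starts(total_width, window_width, stride):
    """Start columns of the windows needed to cover an image total_width columns wide."""
    if window_width >= total_width:
        return [0]
    starts = list(range(0, total_width - window_width + 1, stride))
    if starts[-1] + window_width < total_width:
        # Add a final window flush with the right edge so nothing is left uncovered.
        starts.append(total_width - window_width)
    return starts

def fully_covered(call_start, call_end, starts, window_width):
    """True if at least one window contains the whole call [call_start, call_end)."""
    return any(s <= call_start and call_end <= s + window_width for s in starts)

overlapping = window_starts(1000, window_width=200, stride=100)  # 50% overlap
side_by_side = window_starts(1000, window_width=200, stride=200)  # no overlap

# A call that straddles column 200, the edge between the first two side-by-side windows.
call = (190, 260)
print("with overlap:   ", fully_covered(*call, overlapping, 200))
print("without overlap:", fully_covered(*call, side_by_side, 200))
```

With overlap, the window starting at column 100 contains the whole call. Without it, the call is split between two windows and neither sees its full shape.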

For every window, the model also analyses three versions simultaneously:

- The raw image

- A smoothed version that emphasises the call’s overall shape

- A detail-enhanced version that sharpens fine features

This means the model reads both broad patterns and fine detail at the same time, like stepping back to see the shape before zooming in. By scanning the entire recording this way, Alato Lab ensures no call goes undetected, no matter where it appears.
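As a rough illustration of the three views, the sketch below builds a smoothed and a detail-enhanced copy of a small greyscale window. The article does not name the filters used, so the 3x3 mean blur and the unsharp mask here are assumptions, as are the function names and the idea of handing the three views to the model together as a stack.

```python
def box_blur(image):
    """3x3 mean filter; edge pixels average over the neighbours that exist."""
    h, w = len(image), len(image[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [image[j][i]
                    for j in range(max(0, y - 1), min(h, y + 2))
                    for i in range(max(0, x - 1), min(w, x + 2))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out

def sharpen(image, amount=1.0):
    """Unsharp mask: add back the difference between the image and its blur, clipped to 0..1."""
    blurred = box_blur(image)
    return [[min(1.0, max(0.0, p + amount * (p - b))) for p, b in zip(row, blurred_row)]
            for row, blurred_row in zip(image, blurred)]

def three_views(window):
    """Raw, smoothed, and detail-enhanced versions of one window."""
    return [window, box_blur(window), sharpen(window)]

# Tiny example: a single bright pixel on a dark background.
window = [[0.0, 0.0, 0.0],
          [0.0, 1.0, 0.0],
          [0.0, 0.0, 0.0]]
raw, smooth, detail = three_views(window)
print("smoothed centre:", round(smooth[1][1], 3))
print("sharpened centre:", detail[1][1])
```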

Sliding window diagram: the model looks at the same recording multiple times, one window at a time, to find bat calls.

How Alato Lab Shows Its Certainty

Behind every detection is a confidence score that tells us how certain the model is. Rather than showing raw numbers, Alato Lab translates these into plain labels that are easy to act on.

If the model is highly confident it has found a Long-tailed bat, it labels it “Long-tailed bat”. If it’s less certain, it labels it “Possible Long-tailed bat”. The same applies to Short-tailed bats. This means you can trust the confident detections for your reporting and focus your review time on the “possibles”, a much shorter list you can work through directly in the app.

When the model scans a recording, it looks for the single best window, the moment where it’s most certain it’s seen a bat call. A 95% confidence score means the model found at least one perfect or near-perfect match, even if the rest of the file is silent or noisy.

Our thresholds are set conservatively:

- High Confidence (95%+): The model is certain. These are labelled as the species and can go straight into your statistics.

- Low Confidence: The model found something but isn’t sure enough to commit. These are labelled as “Possible” and become your review list.
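The best-window rule and the two labels can be sketched in a few lines. The 95% threshold comes from the text. The function name, the input format (one species and score per window), and the example scores are hypothetical, and the sketch assumes the recording has already passed the detection step.

```python
HIGH_CONFIDENCE = 0.95  # threshold quoted in the text

def label_recording(window_scores):
    """Pick the single most confident window and turn its score into a plain label.

    window_scores: list of (species, confidence) pairs, one per window.
    """
    species, confidence = max(window_scores, key=lambda pair: pair[1])
    name = f"{species} bat"
    label = name if confidence >= HIGH_CONFIDENCE else f"Possible {name}"
    return label, confidence

clear_call = [("Long-tailed", 0.12), ("Long-tailed", 0.97), ("Short-tailed", 0.30)]
faint_call = [("Short-tailed", 0.40), ("Short-tailed", 0.81)]

print(label_recording(clear_call))
print(label_recording(faint_call))
```

Because only the best window counts, one clear call is enough for a high-confidence label even when every other window in the file scores low.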

Setting a high bar for what counts as “certain” means you’ll have some “possibles” to review, but that’s far better than missing real bat activity. In conservation monitoring, it’s always better to flag something for a quick human check than to let it slip through unnoticed.

Where Does AI End and Human Judgement Begin?

There’s a temptation, when introducing AI into scientific workflows, to frame it as either fully autonomous or fundamentally unreliable. Neither is accurate or useful.

Alato Lab is not designed to replace expert judgement. It’s designed to protect your time so that expert judgement is applied where it actually makes a difference. The model handles the high-confidence detections: the clear-cut, unambiguous calls that make up the majority of a typical dataset. What remains for the human analyst is a focused shortlist of recordings that genuinely need attention.

In practice, the workflow looks like this:

- High Confidence detections: export directly for statistics and reporting.

- Low Confidence detections: your expert review queue, curated automatically.

- Non-bat recordings: excluded from reporting with confidence.
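The three-way split above amounts to sorting labelled files into queues. A minimal sketch, assuming each result is a (filename, label) pair and using made-up file names and queue names:

```python
from collections import defaultdict

def route(label):
    """Map a label to the queue it belongs in."""
    if label == "Non-bat":
        return "excluded"
    if label.startswith("Possible"):
        return "review"
    return "export"

def triage(results):
    """Group filenames by queue; results is a list of (filename, label) pairs."""
    queues = defaultdict(list)
    for filename, label in results:
        queues[route(label)].append(filename)
    return dict(queues)

results = [
    ("rec_001.bmp", "Long-tailed bat"),
    ("rec_002.bmp", "Possible Short-tailed bat"),
    ("rec_003.bmp", "Non-bat"),
    ("rec_004.bmp", "Short-tailed bat"),
]
queues = triage(results)
print(queues)
```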

This is what “human-in-the-loop” means in practice. It’s not a workaround for a model that isn’t quite good enough. It’s the correct approach for any scientific tool that needs to be both fast and trustworthy.

Built for the Field, Not the Lab

The AR5 was built to capture New Zealand bat activity as efficiently and reliably as possible. Alato Lab was built to make sense of what it captures. Together, they form an end-to-end workflow, designed by people who work in this field, trained on data from the environments you monitor, and grounded in how good ecological analysis actually works.

Our objective is to scale bat monitoring through faster, more consistent analysis by automating time-intensive processing. This reduces manual workload while maintaining quality, so ecologists can focus on high-impact fieldwork and conservation outcomes.

Alato Lab is available now. If you’re working with New Zealand bat data and want to see how it performs on your recordings, get in touch.

For more on pekapeka (bat) monitoring and handling, DOC has useful resources available.
